What You Should Know Before Using AI Chatbots or Health Apps

AI tools are becoming a go-to source for health advice.

A recent survey from KFF found that 1 in 6 people use AI chatbots for health questions each month. But convenience can come at a cost, especially when it comes to privacy and accuracy.

Privacy: You may be giving up more than you think

Most AI chatbots (like ChatGPT, Gemini, and Claude) and health apps (like Fitbit or symptom trackers) are not covered by HIPAA, the federal law that protects your medical records.

That means your data is not protected by HIPAA once you share it. It can be stored, analyzed, shared, and even sold without your knowledge or consent. Even companies that promise to keep your information confidential can change their privacy policies at any time.

Some platforms now encourage users to upload lab results or medical records. But once that information leaves a HIPAA-protected setting, any HIPAA protections are gone. And once your data is shared, you can’t take it back.

These are serious concerns. You may recall, for example, the public outcry when 23andMe filed for bankruptcy and people first realized that their genetic data could be sold to the highest bidder. As the Center for Democracy and Technology points out:

“People don’t want their data, especially sensitive data like health and genetic data, to be used and shared in ways they don’t want–or shared or sold to parties they don’t know”.

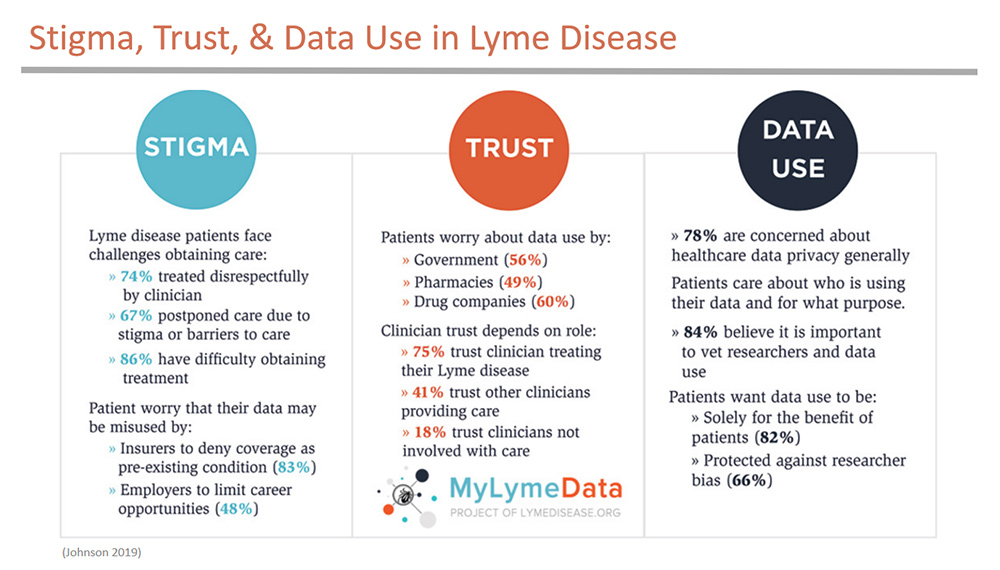

The incentives for companies to use your data for their own benefit are quite strong. The market for data is $434 billion. Health data is hard to come by, and it is extremely valuable. It may be used to train AI systems, target ads, or be shared or sold to third parties. Lyme patients are particularly worried about health data disclosure because of stigma associated with the disease. In a MyLymeData survey of over 1,900 participants:

Patients told us they were concerned about misuse of their data by insurers, employers, the government, and pharma. And they strongly believed that their data should be protected against researcher bias and should only be used for the benefit of patients.

Their top two concerns were misuse of health data by insurers to deny coverage and by employers to discriminate in job recruitment and advancement. When patients strip health data of its HIPAA protection by providing it to chatbots or health apps, they open the door to both of these abuses. Health apps may seem less risky, but they carry the same privacy risks.

Newsweek recently reported on a conversation between a US Senator and Claude AI about what data is being collected and how AI uses it. Even bearing in mind how “agreeable” AI bots can be, the message is chilling. The video can be viewed here. A portion of the transcript is below:

The thing that would probably shock most Americans is that companies are collecting data from everywhere. Your browsing history, your location, what you buy, what you search for, even how long you pause on a web page. Then they’re feeding all of that into AI systems that create incredibly detailed profiles about you. What would surprise people is how little they actually consented to and how little they understand about it. Most people click agree on terms of service without reading them. And they have no idea that their data is being combined with thousands of other data points to build a picture of who they are. And then that AI uses those profiles to decide what ads you see, what prices you’re shown, even what information gets prioritized in your social media feed. It’s all happening in the background, invisible and largely unregulated.

Accuracy: AI isn’t always right

AI chatbots can sound certain about the information they provide, but they can be wrong. They may rely on data that is outdated or biased and can repeat existing medical misinformation. Lyme patients are aware of how misinformation can contribute to delayed diagnosis and poor outcomes.

In other cases, errors can lead to delayed or inappropriate care. An NPR report found that over half of patients using AI to decide about ER visits were incorrectly told they could wait, including in some serious, life-threatening cases.

How to use AI more safely

If you choose to use AI chatbots or health apps, I recommend that you:

- Be careful about any information you share. Don’t share identifying details (even a ZIP code can matter) and don’t upload lab results or records. Ask questions in general terms, without personal context, e.g. “a 35-year-old female with the following symptoms”.

- Use caution when relying on any information provided to you about serious health issues. Ask for sources and verify them. Check with your clinician before acting on advice. Don’t put off going to the ER based on AI advice. It is simply not reliable enough at this point.

Bottom line

AI can be helpful, but be careful not to disclose identifying information or upload medical records. Use good judgment about what you rely on when talking with AI chatbots or health apps, particularly regarding any serious healthcare concerns.

We invite you to comment on our Facebook page.
